Paged Attention: Turning the Page on Transformer Memory

Paged Attention:

Turning the Page on

Transformer Memory

How ideas borrowed from operating systems - paging and virtual memory - revolutionize KV cache management for large-scale LLM serving.

Table of Contents

KV Cache and the Hidden Cost of Long-Context LLM Inference

Large Language Models based on the transformer architecture have become an integral part of modern systems, powering applications ranging from search and recommendation to conversational AI and code generation. Despite their success, serving these models efficiently at scale remains a major systems challenge. One of the most critical and often misunderstood bottlenecks is the management of the Key Value cache, commonly referred to as the KV cache.

This article is based on the paper "Efficient Memory Management for Large Language Model Serving with Paged Attention", which introduces a novel systems-level approach to managing the Key Value cache during transformer inference. The paper demonstrates how ideas borrowed from operating systems, such as paging and virtual memory, can be applied to attention mechanisms to significantly improve memory efficiency and throughput when serving large language models at scale.

What is KV Cache?

During transformer inference, the Key Value cache is a critical optimization that enables efficient token generation by storing the attention keys and values computed for previously processed tokens at every transformer layer. In a self attention mechanism, each token produces a query, key, and value vector, but only the query is specific to the current decoding step. The keys and values associated with past tokens remain invariant once computed and are reused for all subsequent steps. By caching these tensors, the model avoids recomputing attention states for the entire prefix at every iteration, reducing the per token computation from quadratic to linear complexity with respect to sequence length.

The KV cache grows monotonically as generation progresses and is maintained per request, per layer, and per attention head. Its memory footprint is therefore proportional to the number of layers, hidden dimension, sequence length, numerical precision, and batch size. While this design dramatically improves computational efficiency, it introduces a significant memory burden, often exceeding the size of the model weights themselves for long sequences or large batches.

For a more in depth look, check out my article on encoders.

Problem at hand

Transformers rely on self attention to allow each token to attend to all previously generated tokens. During inference, one fundamental limitation of the transformer model is that as you generate more and more text, you use up more and more memory. Eventually you reach a point where you run out of memory.

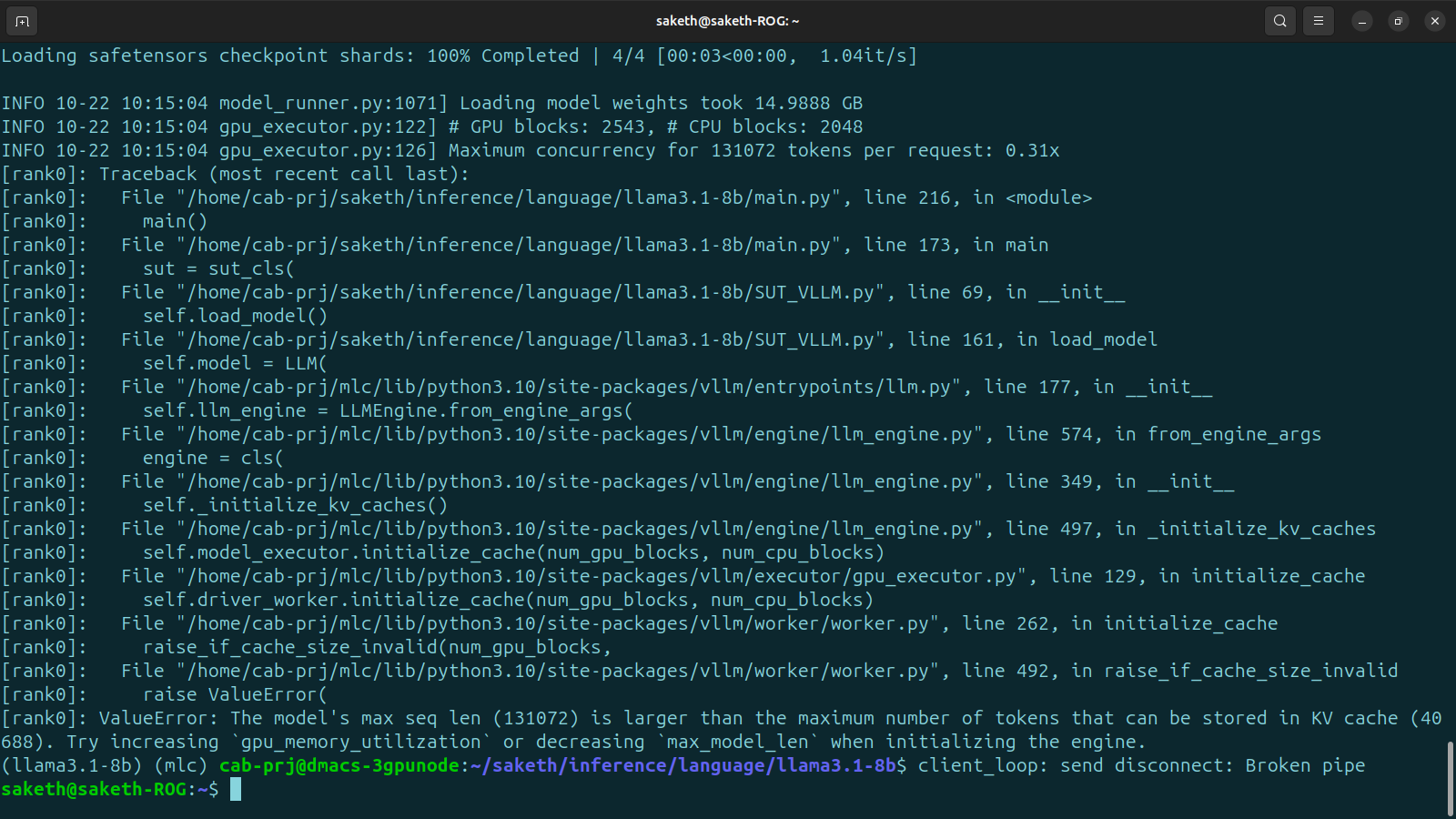

In Figure 2, we can see a real life example from my own work where I attempt to benchmark the LLaMA3.18b model on a NVIDIA RTX 4090 using MLPerf, but the 24GB VRAM wasn't sufficient for a sequence length of that size.

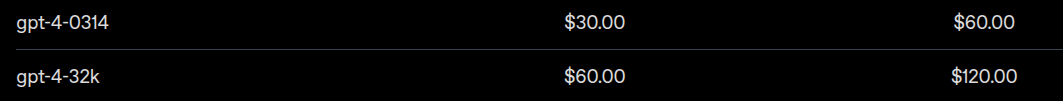

Looking at OpenAIs pricing model (Figure 3) you can notice something interesting. They charge you more (twice as much) for using the longer context model. One of the economic consequence of high memory usage when handling large context lenghts.

Once the Key and Value tensors are computed for a particular token in a sequence, they remain unchanged for the rest of the generation process, regardless of how many additional tokens are produced. In principle, this means the model should be able to reuse this information indefinitely. However, without an explicit caching mechanism, a transformer would be forced to recompute the Keys and Values for all previous tokens at every decoding step. This repeated computation constitutes a significant portion of the inference cost and quickly becomes prohibitively slow as sequence length grows.

An intuitive way to understand this is to imagine writing a document one word at a time. If, before writing each new word, you had to reread and mentally reconstruct everything you had written so far, progress would be extremely slow. A far more efficient approach is to remember what has already been written and focus only on adding the next word. The KV cache plays exactly this role in transformer inference.

The KV cache stores the attention Keys and Values that have already been computed for previous tokens. When the model generates a new token, it computes only the Key and Value vectors corresponding to that token and appends them to the existing cache.

While the KV cache significantly reduces computational overhead, it introduces a substantial memory cost. The memory required to store the cache can be approximated by the following expression:

- Factor of 2: Accounts for storing both the Key and Value tensors in the attention mechanism.

- Precision: Number of bytes used per parameter, for example 4 bytes for FP32 or 2 bytes for FP16.

- Number of layers (Number_Of_Layers): Determined by the transformer architecture and fixed for a given model.

- Model dimension (d_model): The embedding or hidden dimension defined by the model architecture.

- Sequence length (Seq_length): The number of tokens processed or generated, which varies with the input and output length.

- Batch size (Batch_size): The number of sequences processed in parallel during inference, determined by the workload and system capacity.

To illustrate the magnitude of this cost, consider the OPT-30B model. Using FP16 inference, with 48 transformer layers, a model dimension of 7168, a sequence length of 1024 tokens, and a batch size of 128, the KV cache alone requires approximately 180 GB of memory. In contrast, the model weights themselves occupy roughly 60 GB.

Paged Attention to the rescue

Serving large language models is expensive even on high-end GPUs such as the NVIDIA A100. Despite their computational power, a single GPU can handle only a limited number of requests per second. For example, with LLaMA-13B and moderately sized inputs, a single A100 typically achieves fewer than one request per second. As a result, production deployments require large GPU fleets to meet throughput demands, making cost and scalability persistent challenges for many organizations. This has been a critical pain point for many companies.

Now to understand what exactly the issue is here, lets take a look at the steps involved in inferencing (Figure 4).

- 1First the user provides an input (prompt) having multiple tokens.

- 2The prompt is processed by the model to generate the next token.

- 3The generated token is appended to the input sequence and fed back into the model.

- 4The model produces the subsequent token based on the updated sequence.

- 5This iterative process continues until the model reaches a predefined maximum sequence length, such as 2048 tokens, or generates an end-of-sequence token.

The KV cache here is used in this inference process as explain above. So as we can see, it is important to have efficient management of the KV cache for high throughput LLM serving.

Memory waste in previous systems

Looking at the layout of memory in older systems, we can identify 3 main issues.

- We have internal fragmentation - We don't know in advance how many tokens the model will generate.

- Reservation - its not used right now but will be used in the future.

- External fragmentation - Different requests A and B may have different sequence lengths.

According to tests by the authors, only 20% - 40% of KV cache is utilized to store token states and the rest is wasted.

vLLM solves this issue by introducing a new method of memory management similar to the tried and true paging and virtual memory techinques used in OSs called Paged Memory.

Paged Memory

vLLM addresses this problem by applying ideas that have existed in operating systems for decades, specifically paging and virtual memory.

Instead of allocating one large contiguous KV cache per request, vLLM partitions the KV cache into fixed-size blocks called KV blocks. Each block stores the Key and Value states for a fixed number of tokens.

vLLM introduces a level of indirection between logical and physical KV blocks.

- Logical KV blocks represent the sequence order of tokens for a request.

- Physical KV blocks represent actual memory locations on the GPU.

The mapping between logical and physical blocks is maintained in a block table, analogous to a page table in an operating system.

This design allows logical token order to be preserved even when physical memory is fragmented.

These blocks are allocated on demand rather than being pre-allocated upfront.

Paged Attention

Paged Attention introduces a different approach to managing attention memory by abandoning the assumption that all Key Value states must be stored in a single contiguous block of memory. Traditional attention implementations rely on contiguous KV storage, which works well when input and output lengths are known and static. However, this approach becomes highly inefficient for LLM inference, where sequence lengths are dynamic and unpredictable.

To address this, Paged Attention partitions the KV cache into fixed-size blocks and virtualizes them into logical and physical views. This design decouples the logical token order from the physical memory layout and enables on-demand memory allocation.

For example, consider a request with the prompt: "The ".

- The logical KV blocks represent the token sequence in order, with tokens mapped to consecutive logical blocks to preserve their natural order.

- The Physical KV blocks represent actual memory locations, which may be allocated in any order and are not required to be contiguous.

- A block table maintains the mapping between logical KV blocks and physical KV blocks, functioning similarly to a page table in an operating system.

- When the final logical block becomes full, a new physical block is allocated and mapped on demand, rather than being pre-allocated upfront as in traditional systems.

This block-based and virtualized design allows KV cache memory to grow dynamically with the sequence length while avoiding the inefficiencies of large contiguous allocations.

Memory efficiency of paged attention

Paged Attention significantly improves memory efficiency by minimizing both internal and external fragmentation.

- Internal fragmentation is limited to the final block of each sequence, since all preceding blocks are fully utilized. The amount of wasted space per sequence is therefore bounded by the block size. In practice, block sizes of 16 or 32 tokens are commonly used, which is orders of magnitude smaller than typical sequence lengths. As a result, internal fragmentation is negligible.

- External fragmentation is completely eliminated because all KV blocks are of fixed size. This uniformity allows memory to be allocated and reused efficiently without gaps caused by variable-length allocations.

Data collected by the authors show that KV cache space is reduced to less than 4%, representing a 3x–5x improvement over prior systems. This enables larger batch sizes and directly leads to higher throughput during LLM inference.